AI Tools

-

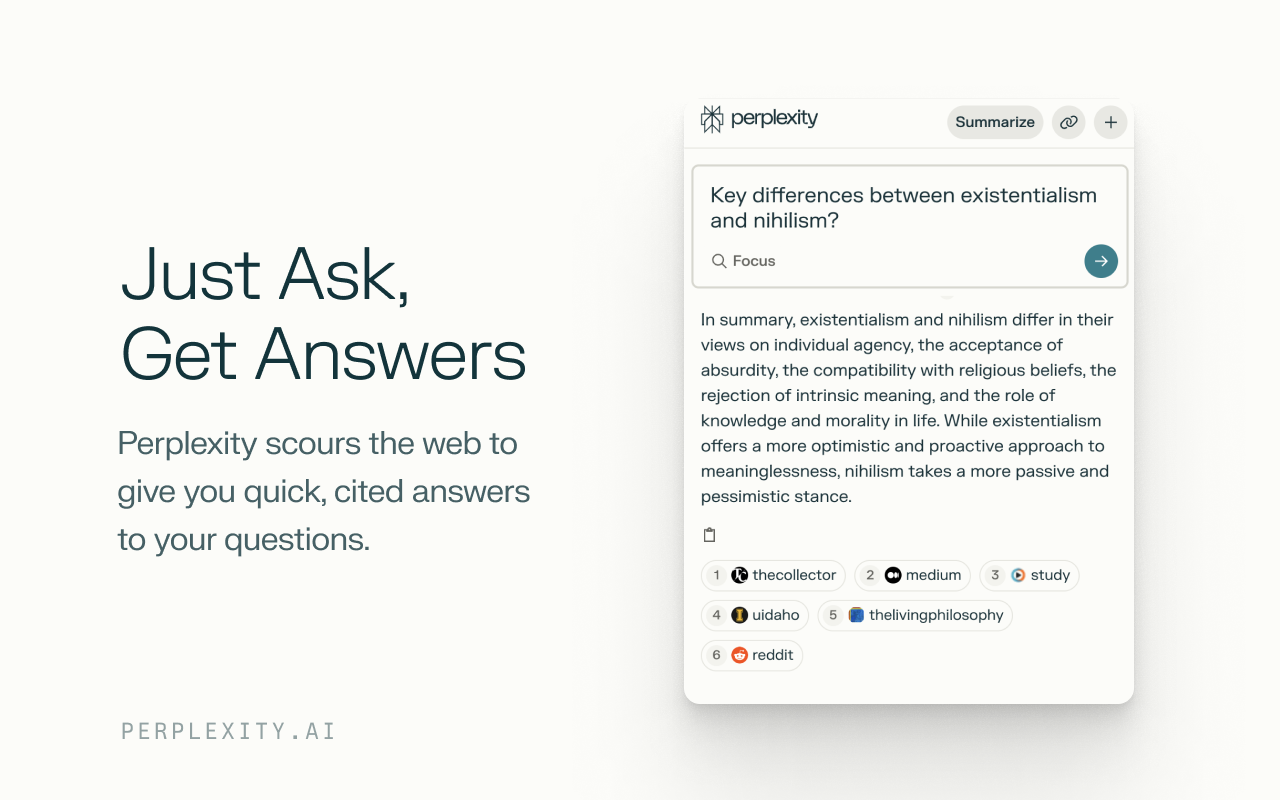

Making Money While Sharing Great AI Tools: My Experience with Perplexity’s Refer-a-Friend Program

I’ve been using Perplexity for a while now, and honestly, it’s become one of my go-to AI tools. So when I discovered they had launched a refer-a-friend program that actually pays real money, I knew I had to share it with you all. Here’s everything you need to know about how it works and why it…

-

Sora 2: How OpenAI’s Latest AI Video Update is Redefining Creativity

Remember when AI-generated videos looked like fever dreams? Those days are officially over. OpenAI just dropped Sora 2, and the internet is losing its collective mind—and for good reason. This isn’t just another incremental update; it’s a seismic shift in how we think about creating video content. Within five days of launch, Sora 2 hit…

-

How Lingo.dev Makes App Localization Fast and Easy for Developers

If you’ve ever tried making your app multilingual, you know the grind: extract text, send to translators, wait, merge, fix layout issues, repeat. It’s a slow, error-prone cycle, especially when your product evolves fast. That’s why, when I came across Lingo.dev, I got curious. Could a developer tool really automate localization end-to-end, without hand-wrangling…